The Internet is an ever-evolving virtual universe with over 1.1 billion websites.

Do you think that Google can crawl every website in the world?

Even with all the resources, money, and data centers that Google has, it cannot even crawl the entire web – nor does it want to.

What Is Crawl Budget, And Is It Important?

Crawl budget refers to the amount of time and resources that Googlebot spends on crawling web pages in a domain.

It is important to optimize your site so Google will find your content faster and index your content, which could help your site get better visibility and traffic.

If you have a large site that has millions of web pages, it is particularly important to manage your crawl budget to help Google crawl your most important pages and get a better understanding of your content.

Google states that:

If your site does not have a large number of pages that change rapidly, or if your pages seem to be crawled the same day that they are published, keeping your sitemap up to date and checking your index coverage regularly is enough. Google also states that each page must be reviewed, consolidated and assessed to determine where it will be indexed after it has crawled.

Crawl budget is determined by two main elements: crawl capacity limit and crawl demand.

Crawl demand is how much Google wants to crawl on your website. More popular pages, i.e., a popular story from CNN and pages that experience significant changes, will be crawled more.

Googlebot wants to crawl your site without overwhelming your servers. To prevent this, Googlebot calculates a crawl capacity limit, which is the maximum number of simultaneous parallel connections that Googlebot can use to crawl a site, as well as the time delay between fetches.

Taking crawl capacity and crawl demand together, Google defines a site’s crawl budget as the set of URLs that Googlebot can and wants to crawl. Even if the crawl capacity limit is not reached, if crawl demand is low, Googlebot will crawl your site less.

Here are the top 12 tips to manage crawl budget for large to medium sites with 10k to millions of URLs.

1. Determine What Pages Are Important And What Should Not Be Crawled

Determine what pages are important and what pages are not that important to crawl (and thus, Google visits less frequently).

Once you determine that through analysis, you can see what pages of your site are worth crawling and what pages of your site are not worth crawling and exclude them from being crawled.

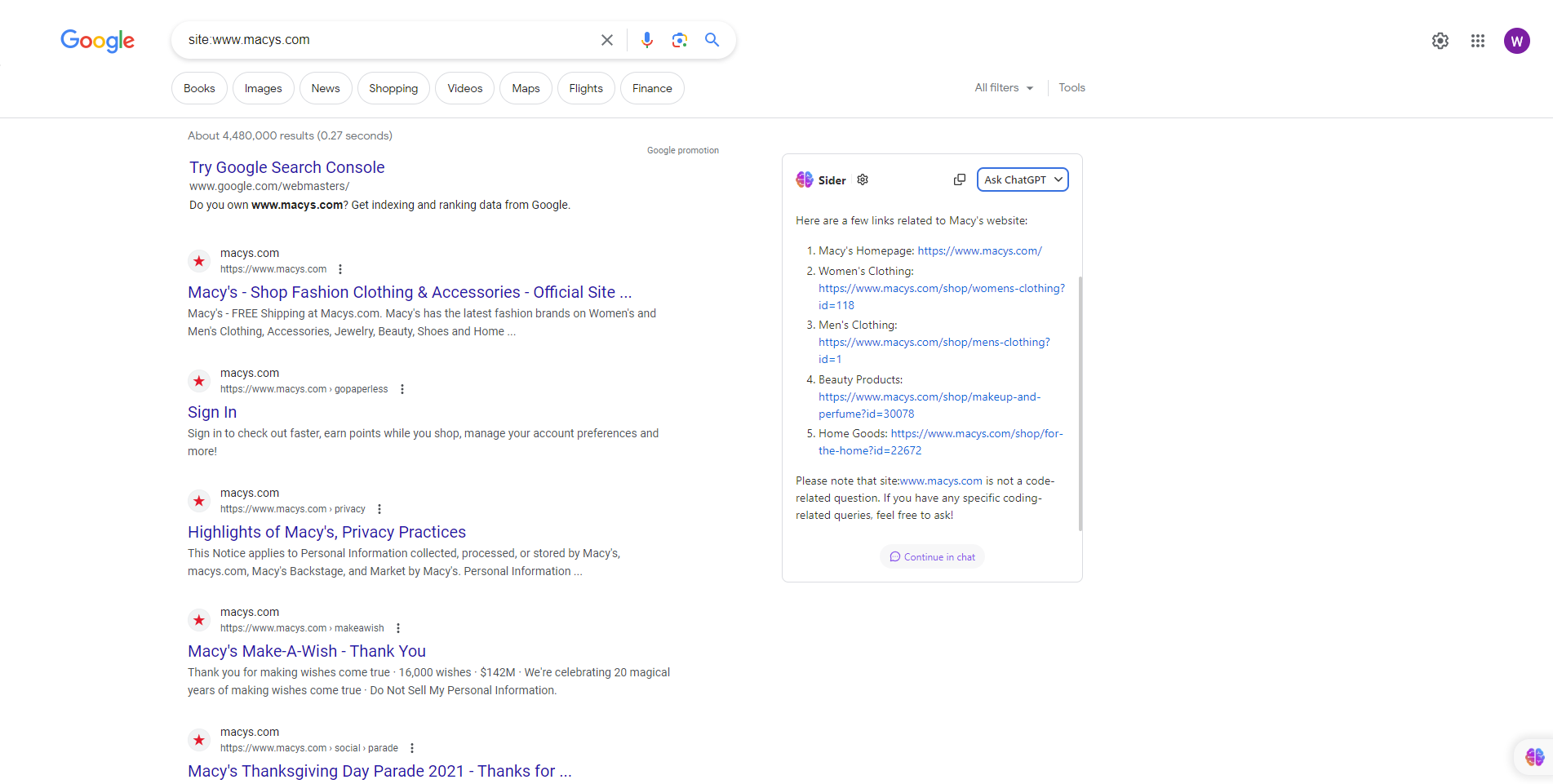

For example, Macys.com has over 2 million pages that are indexed.

Screenshot from search for [site: macys.com], Google, June 2023

Screenshot from search for [site: macys.com], Google, June 2023It manages its crawl budget by informing Google not to crawl certain pages on the site because it restricted Googlebot from crawling certain URLs in the robots.txt file.

Googlebot may decide it is not worth its time to look at the rest of your site or increase your crawl budget. Make sure that Faceted navigation and session identifiers: are blocked via robots.txt

2. Manage Duplicate Content

While Google does not issue a penalty for having duplicate content, you want to provide Googlebot with original and unique information that satisfies the end user’s information needs and is relevant and useful. Make sure that you are using the robots.txt file.

Google stated not to use no index, as it will still request but then drop.

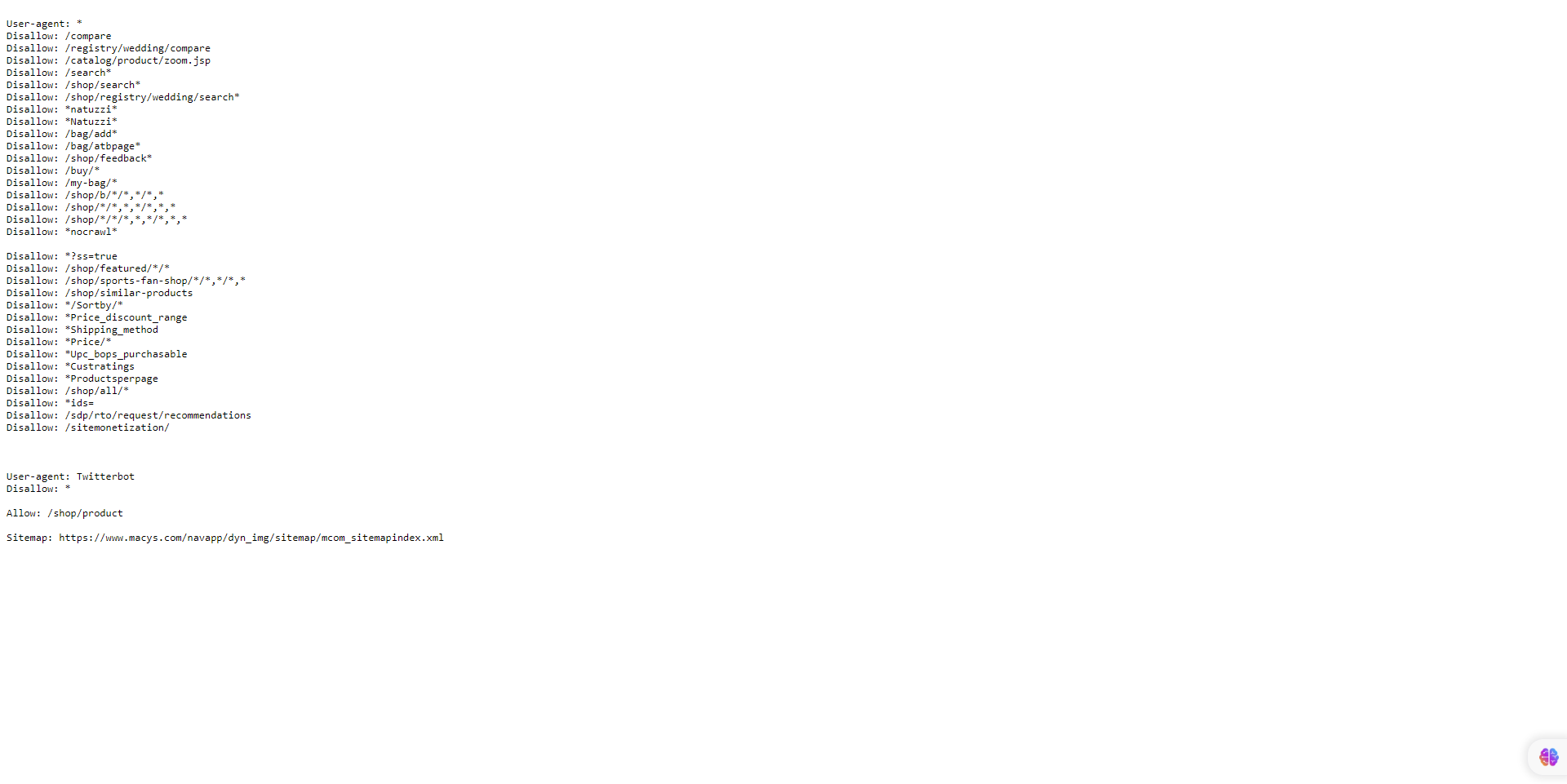

3. Block Crawling Of Unimportant URLs Using Robots.txt And Tell Google What Pages It Can Crawl

For an enterprise-level site with millions of pages, Google recommends blocking the crawling of unimportant URLs using robots.txt.

Also, you want to ensure that your important pages, directories that hold your golden content, and money pages are allowed to be crawled by Googlebot and other search engines.

Screenshot from author, June 2023

Screenshot from author, June 20234. Long Redirect Chains

Keep your number of redirects to a small number if you can. Having too many redirects or redirect loops can confuse Google and reduce your crawl limit.

Google states that long redirect chains can have a negative effect on crawling.

5. Use HTML

Using HTML increases the odds of a crawler from any search engine visiting your website.

While Googlebots have improved when it comes to crawling and indexing JavaScript, other search engine crawlers are not as sophisticated as Google and may have issues with other languages other than HTML.

6. Make Sure Your Web Pages Load Quickly And Offer A Good User Experience

Make your site is optimized for Core Web Vitals.

The quicker your content loads – i.e., under three seconds – the quicker Google can provide information to end users. If they like it, Google will keep indexing your content because your site will demonstrate Google crawl health, which can make your crawl limit increase.

7. Have Useful Content

According to Google, content is rated by quality, regardless of age. Create and update your content as necessary, but there is no additional value in making pages artificially appear to be fresh by making trivial changes and updating the page date.

If your content satisfies the needs of end users and, i.e., helpful and relevant, whether it is old or new does not matter.

If users do not find your content helpful and relevant, then I recommend that you update and refresh your content to be fresh, relevant, and useful and promote it via social media.

Also, link your pages directly to the home page, which may be seen as more important and crawled more often.

8. Watch Out For Crawl Errors

If you have deleted some pages on your site, ensure the URL returns a 404 or 410 status for permanently removed pages. A 404 status code is a strong signal not to crawl that URL again.

Blocked URLs, however, will stay part of your crawl queue much longer and will be recrawled when the block is removed.

- Also, Google states to remove any soft 404 pages, which will continue to be crawled and waste your crawl budget. To test this, go into GSC and review your Index Coverage report for soft 404 errors.

If your site has many 5xx HTTP response status codes (server errors) or connection timeouts signal the opposite, crawling slows down. Google recommends paying attention to the Crawl Stats report in Search Console and keeping the number of server errors to a minimum.

By the way, Google does not respect or adhere to the non-standard “crawl-delay” robots.txt rule.

Even if you use the nofollow attribute, the page can still be crawled and waste the crawl budget if another page on your site, or any page on the web, does not label the link as nofollow.

9. Keep Sitemaps Up To Date

XML sitemaps are important to help Google find your content and can speed things up.

It is extremely important to keep your sitemap URLs up to date, use the <lastmod> tag for updated content, and follow SEO best practices, including but not limited to the following.

- Only include URLs you want to have indexed by search engines.

- Only include URLs that return a 200-status code.

- Make sure a single sitemap file is less than 50MB or 50,000 URLs, and if you decide to use multiple sitemaps, create an index sitemap that will list all of them.

- Make sure your sitemap is UTF-8 encoded.

- Include links to localized version(s) of each URL. (See documentation by Google.)

- Keep your sitemap up to date, i.e., update your sitemap every time there is a new URL or an old URL has been updated or deleted.

10. Build A Good Site Structure

Having a good site structure is important for your SEO performance for indexing and user experience.

Site structure can affect search engine results pages (SERP) results in a number of ways, including crawlability, click-through rate, and user experience.

Having a clear and linear structure of your site can use your crawl budget efficiently, which will help Googlebot find any new or updated content.

Always remember the three-click rule, i.e., any user should be able to get from any page of your site to another with a maximum of three clicks.

11. Internal Linking

The easier you can make it for search engines to crawl and navigate through your site, the easier crawlers can identify your structure, context, and important content.

Having internal links pointing to a web page can inform Google that this page is important, help establish an information hierarchy for the given website, and can help spread link equity throughout your site.

12. Always Monitor Crawl Stats

Always review and monitor GSC to see if your site has any issues during crawling and look for ways to make your crawling more efficient.

You can use the Crawl Stats report to see if Googlebot has any issues crawling your site.

If availability errors or warnings are reported in GSC for your site, look for instances in the host availability graphs where Googlebot requests exceeded the red limit line, click into the graph to see which URLs were failing, and try to correlate those with issues on your site.

Also, you can use the URL Inspection Tool to test a few URLs on your site.

If the URL inspection tool returns host load warnings, that means that Googlebot cannot crawl as many URLs from your site as it discovered.

Wrapping Up

Crawl budget optimization is crucial for large sites due to their extensive size and complexity.

With numerous pages and dynamic content, search engine crawlers face challenges in efficiently and effectively crawling and indexing the site’s content.

By optimizing your crawl budget, site owners can prioritize the crawling and indexing of important and updated pages, ensuring that search engines spend their resources wisely and effectively.

This optimization process involves techniques such as improving site architecture, managing URL parameters, setting crawl priorities, and eliminating duplicate content, leading to better search engine visibility, improved user experience, and increased organic traffic for large websites.

More resources:

Featured Image: BestForBest/Shutterstock