It’s a common belief that AI-generated blog posts are inherently low-quality and inferior to their human-made equivalents.

Companies that scale AI-generated content do so knowing, we believe, that they are making a trade-off: choosing speed and scale at the expense of quality. We agree that AI is faster than any human and makes a passable first draft, but we know we are still sacrificing something important by using it.

I now think this belief is outdated. We have reached the point, I think, where generative AI can create content indistinguishable from the vast corpus of human-written content produced by content marketers, like me, in previous years.

AI has become a more thorough researcher, a more compliant adherent to brand and voice guidelines, more flexible in its response to feedback, faster, and more efficient. There is no longer a trade-off inherent in using AI for content creation.

This is not to say that all AI content is, by default, good; only that the barriers that prevented AI content generation from being good have fallen. Access to world-class writing through LLMs is still uneven, but it will not stay this way for long. Functionally “perfect” AI content is just around the corner, for all of us, and it’s in our interest to acknowledge it.

Here’s why.

Many people believe there is some inherent quality within human writing that AI can never reach, some creative spark forever beyond our silicon counterparts.

I won’t claim that AI will ever approach the profundity of Shakespeare, but I will claim that “great writing” is simpler and more mechanical than most people assume. Most of the constituent parts of “great writing” are things that LLMs can do very, very well.

I have spent my entire career trying to become a better writer by introspecting into the writing process, asking why some things work and others don’t. I am not an expert in this field, but I have developed an effective worldview of the mechanics of writing, and a set of writing principles that I follow time and time again.

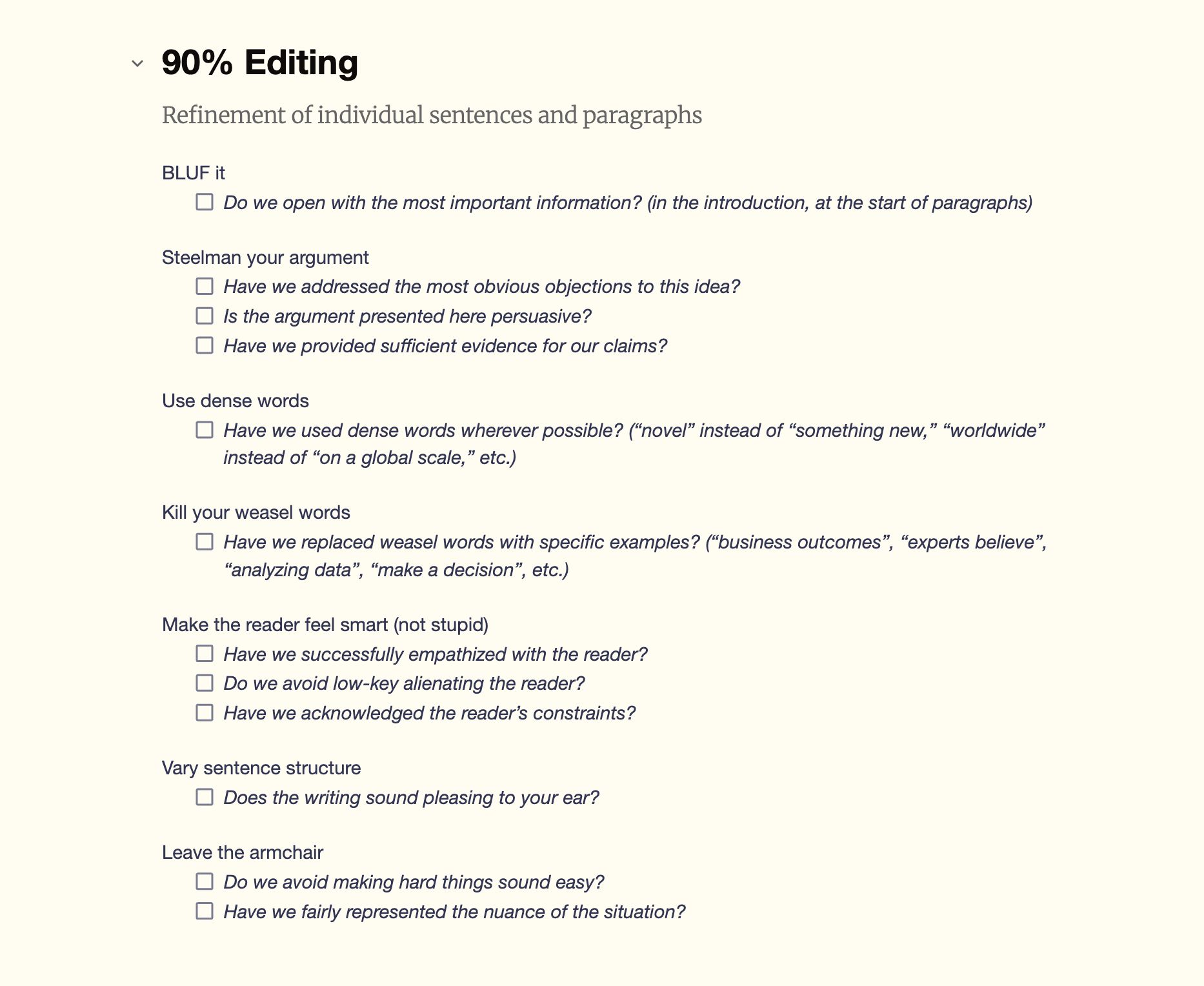

A small snapshot of my editing checklists for training new writers.

For example, here are some random excerpts from my editing checklist:

- Have we addressed the most obvious objections to this idea?

- Have we used dense words wherever possible? (“novel” instead of “something new,” “worldwide” instead of “on a global scale,” etc.)

- Have we replaced weasel words with specific examples? (“business outcomes”, “experts believe”, “analyzing data”, “make a decision”, etc.)

- Do we avoid making hard things sound easy?

- Do we open with the most important information? (in the introduction, at the start of paragraphs)

- etc.

These principles are how I write, how I edit writing, and how I teach writing. They are extremely simple, but executed together, they ladder up to writing that is pretty regularly good, even great.
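To show how mechanical some of these checks are, here is a minimal Python sketch. It is a hypothetical illustration, not part of my actual editing process: the phrase lists are taken from the checklist examples above, and the checks are plain substring matches. An LLM applies the same principles with far more judgment, but each rule is just as easy to state and to apply consistently.

```python
# Hypothetical phrase lists, drawn from the checklist examples above.
# A real editorial checklist would be much longer.
DENSE_WORDS = {
    "something new": "novel",
    "on a global scale": "worldwide",
}
WEASEL_PHRASES = ["business outcomes", "experts believe", "analyzing data", "make a decision"]


def review(draft: str) -> list[str]:
    """Return one note per checklist violation found in the draft."""
    notes = []
    lowered = draft.lower()  # case-insensitive matching
    # Checklist item: "Have we used dense words wherever possible?"
    for wordy, dense in DENSE_WORDS.items():
        if wordy in lowered:
            notes.append(f'Use "{dense}" instead of "{wordy}"')
    # Checklist item: "Have we replaced weasel words with specific examples?"
    for phrase in WEASEL_PHRASES:
        if phrase in lowered:
            notes.append(f'Replace "{phrase}" with a specific example')
    return notes


print(review("Experts believe this is something new on a global scale."))
```

Run on that sample sentence, the sketch returns three notes: two dense-word suggestions and one weasel-word flag.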

These principles are so simple, in fact, that an LLM can perform them perfectly—and usually better than I can. I often apply these principles unevenly, through fatigue or boredom or laziness. But for an LLM, these principles can be set up once and followed indefinitely. They can be scaled evenly to hundreds, thousands, millions of outputs, codified in system prompts and SKILL files (more on these in the next section).

If you acknowledge that a basic recipe exists for great writing (and I believe it does), LLMs can follow it very well. Chain many of these heuristics together in a reliable way, and you can build an exceptional AI writing process.

And, at last, we have the technology to enable that.
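To make “chaining heuristics together” concrete, here is a minimal Python sketch under loose assumptions: each heuristic is a function that takes a draft and returns a revised draft, and the same ordered list of steps is applied to every draft. The step names and the `KEY:` line convention are hypothetical; in a real workflow, each step would be an LLM call guided by a system prompt or SKILL file rather than a string edit.

```python
from typing import Callable

# A step takes a draft and returns a revised draft. These hypothetical
# stand-ins use plain string edits so the sketch runs on its own.
Step = Callable[[str], str]


def use_dense_words(draft: str) -> str:
    # Checklist item: prefer dense words over wordy phrases
    return draft.replace("on a global scale", "worldwide")


def lead_with_key_point(draft: str) -> str:
    # Checklist item: open with the most important information.
    # Hypothetical convention: a line starting with "KEY:" holds the main point.
    lines = draft.splitlines()
    key = [line for line in lines if line.startswith("KEY:")]
    rest = [line for line in lines if not line.startswith("KEY:")]
    return "\n".join(key + rest)


def run_pipeline(draft: str, steps: list[Step]) -> str:
    """Apply every step, in order, to a single draft."""
    for step in steps:
        draft = step(draft)
    return draft


# Set up once, then applied identically to every draft.
PIPELINE: list[Step] = [use_dense_words, lead_with_key_point]

drafts = ["Intro line.\nKEY: Sales grew on a global scale."]
results = [run_pipeline(d, PIPELINE) for d in drafts]
print(results)
```

The design choice that matters is that the pipeline is defined once and reused: adding a hundred more drafts to `drafts` changes nothing about how evenly the steps are applied.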

Many people’s view of AI is still anchored in the chat experience. But LLMs, and more importantly the infrastructure that surrounds them, have progressed massively in recent months.

Even in their nascent days, large language models showed sparks of superhuman brilliance in small areas. But like a precocious child imitating its parents with no real understanding of its behaviour, it was hard to imagine these sparks progressing into a blaze of genuine writing ability.

A handful of coherent sentences seems a million miles away from reliably generating thousands of words of accurate, helpful, concise, on-brand writing, let alone identifying and filling topic gaps, understanding the dominant search intent, and differentiating from competitor articles.

When I wrote about my previous AI writing process (using custom GPTs based on my editorial principles), I saw many sparks of brilliance in the output, but the final product still relied on human intervention.

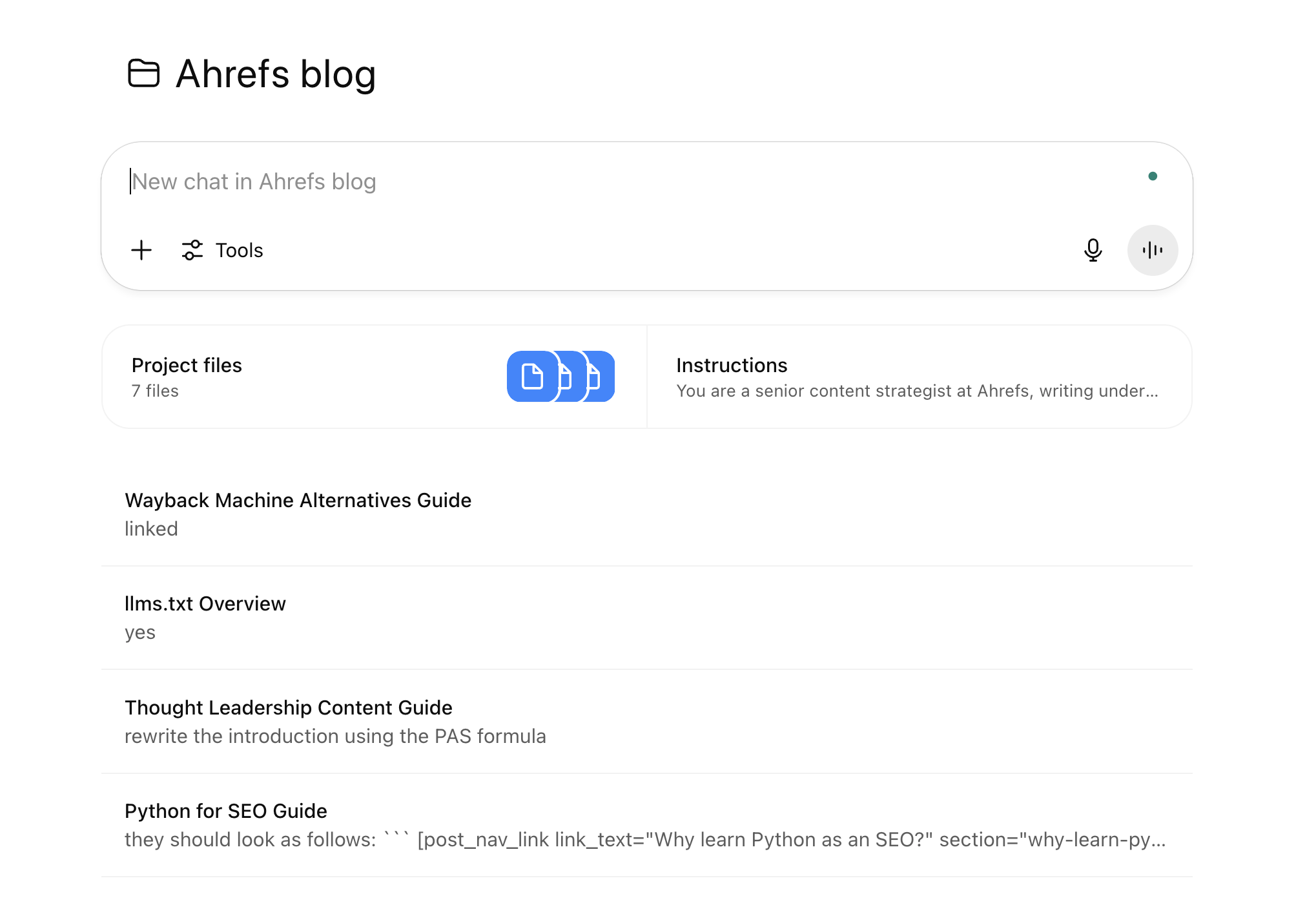

An early version of our AI content pipeline, built using ChatGPT projects and custom GPTs.

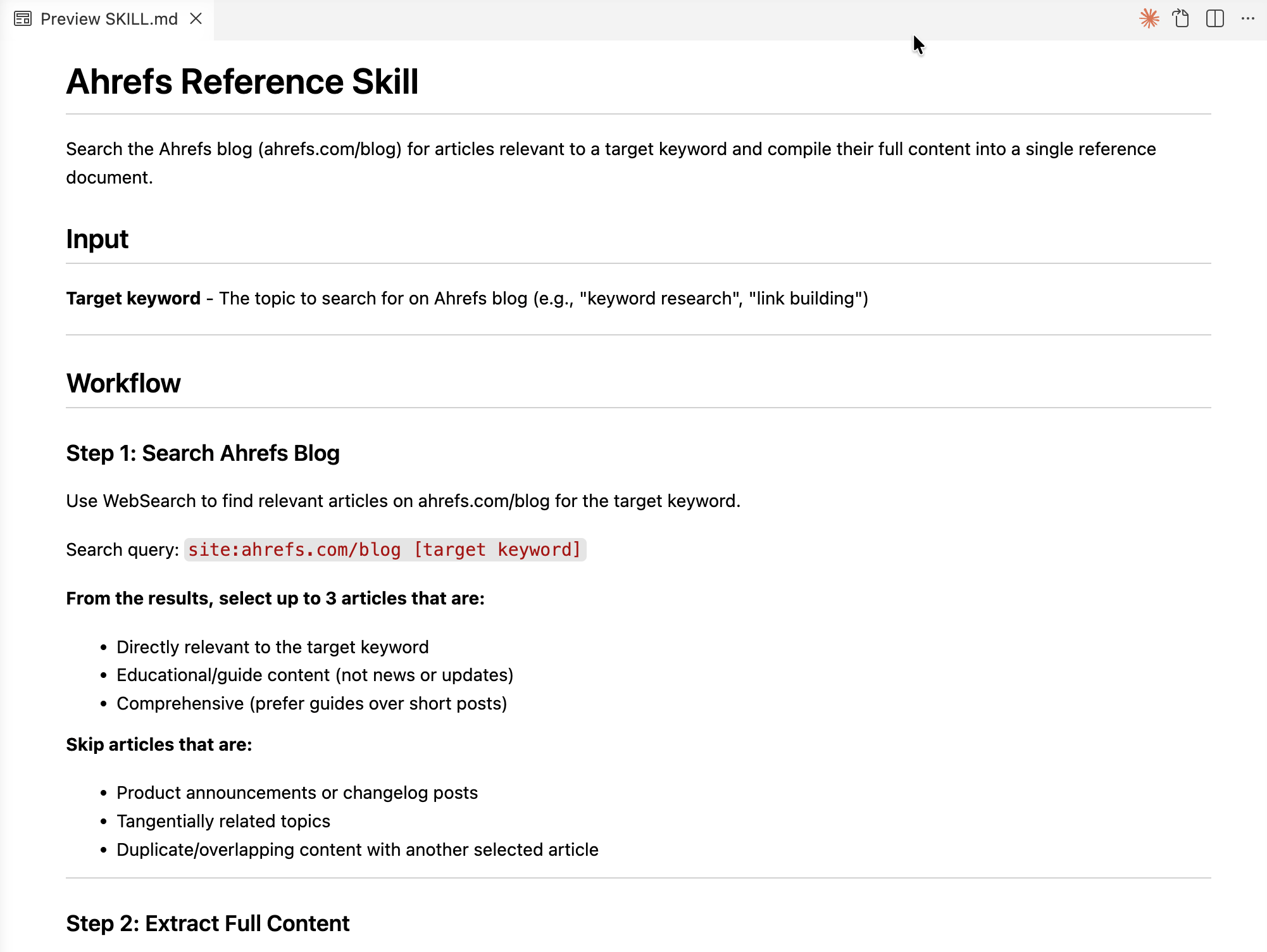

But that is no longer the case. Just seven months later, the limitations of that process have disappeared. Today, my $20/month Claude subscription provides access to capabilities that feel almost like science fiction. I can:

- Chain multiple LLM processes together into one continuous workflow (Claude Code, OpenAI Codex, and other agentic models).

- Provide guardrails that reduce much of the probabilistic “wobble” we usually see when LLMs try to follow processes, and encourage them to recursively benchmark their performance and self-improve (SKILLs).

- Integrate AI into existing workflows across other tools (MCP).

- Ground content in bodies of research, samples of existing writing, tone-of-voice guidance, and brand guidelines (RAG, memory, context).

(And this ignores the significant improvements flagship models themselves have shown in recent years.)

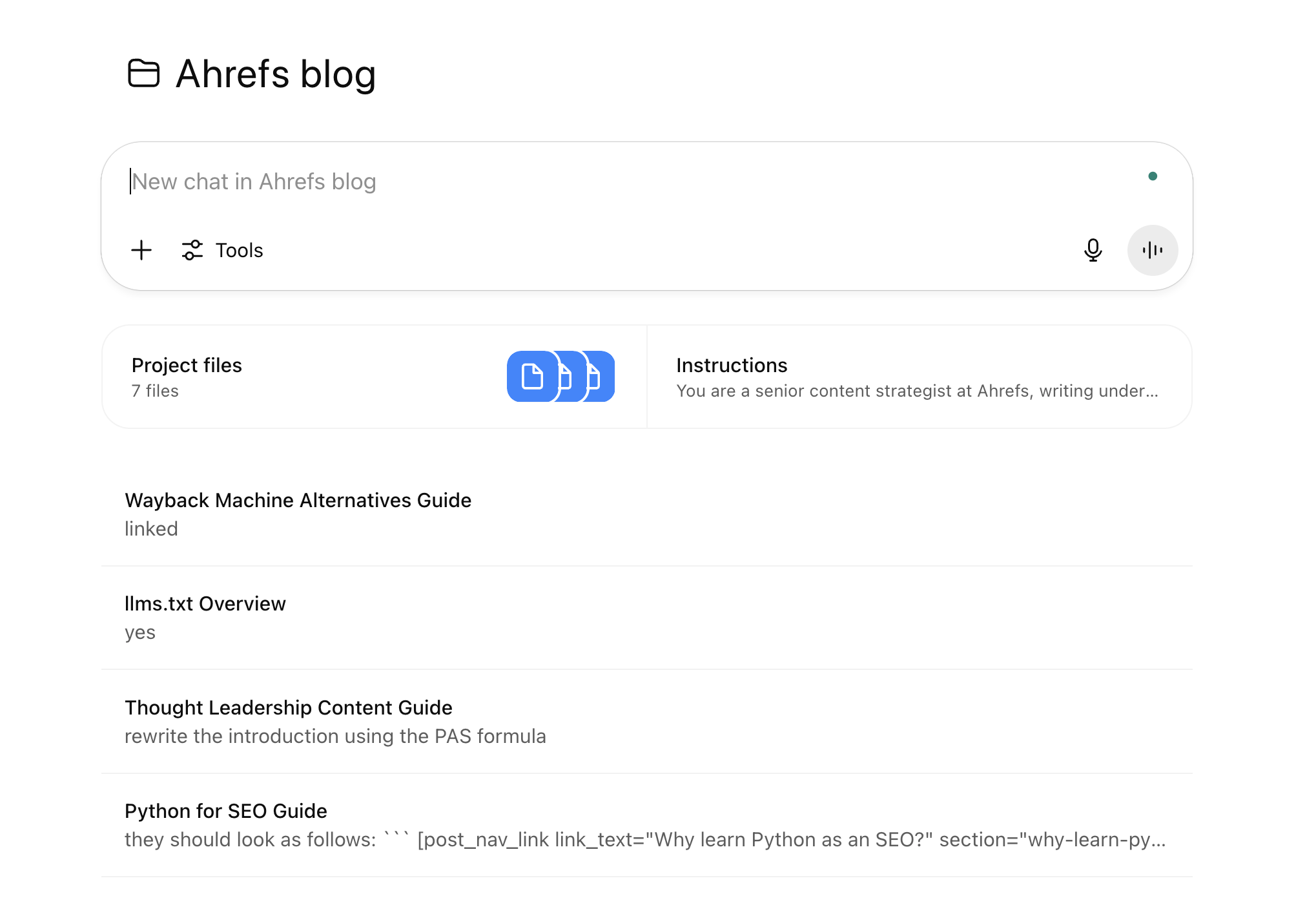

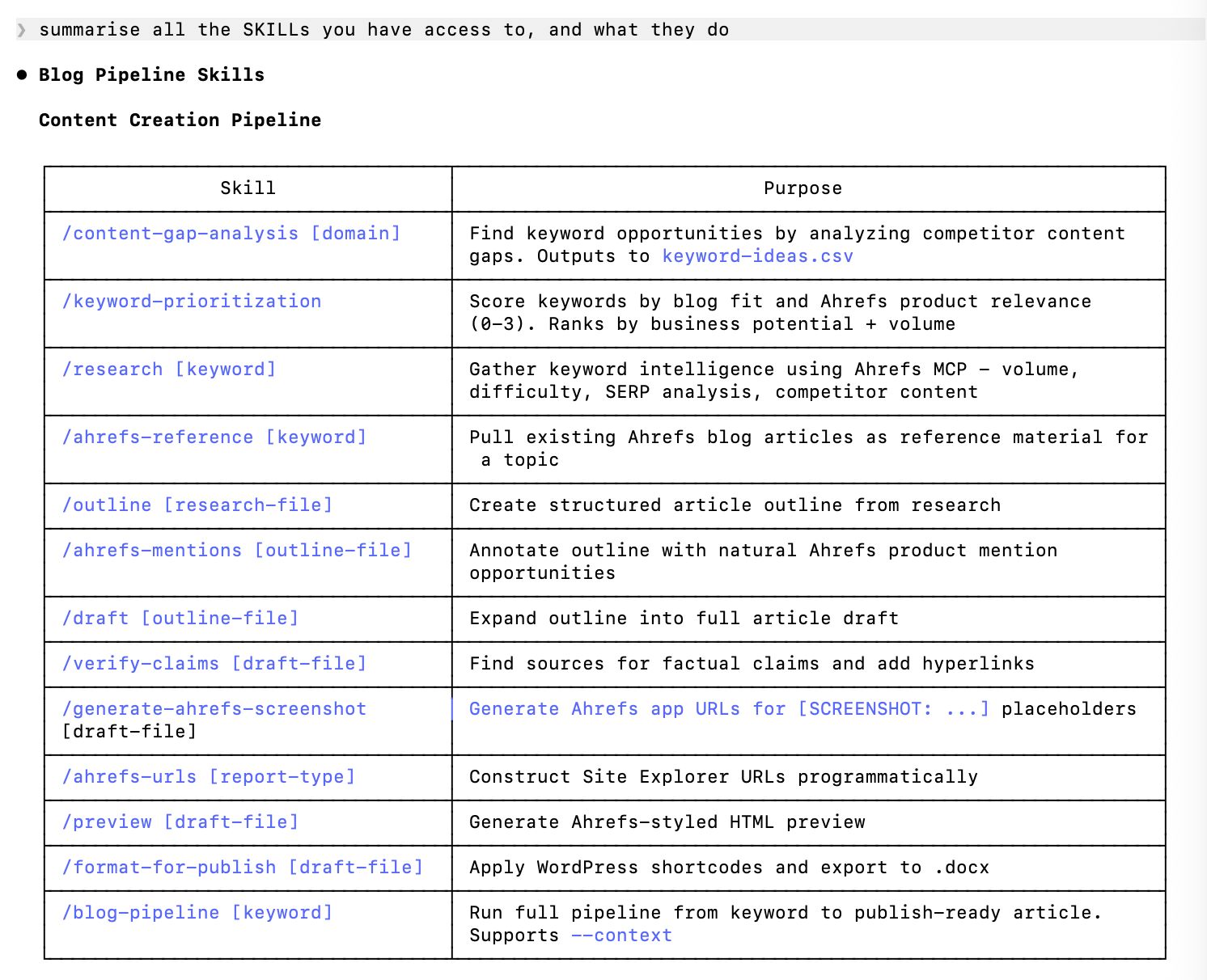

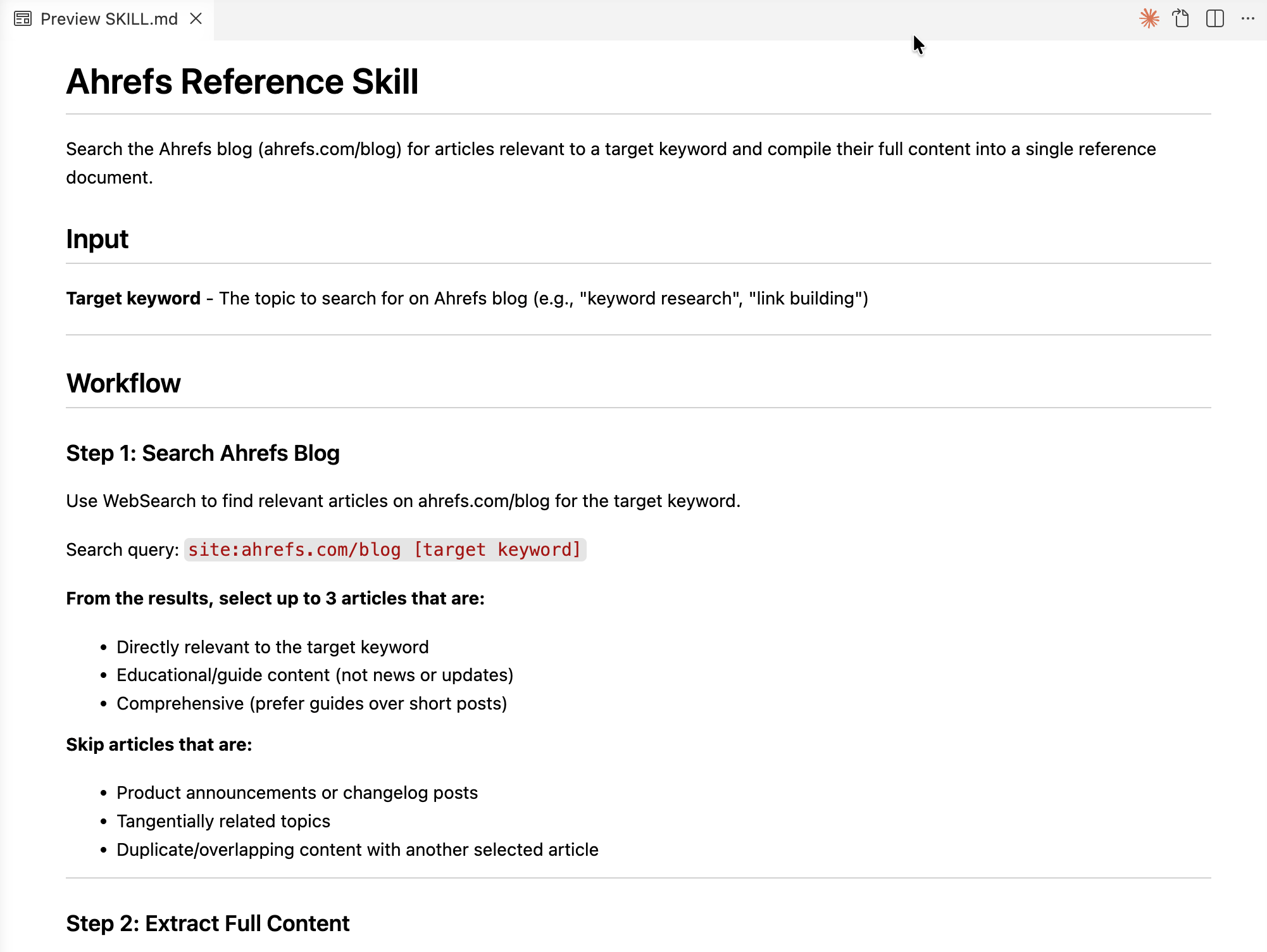

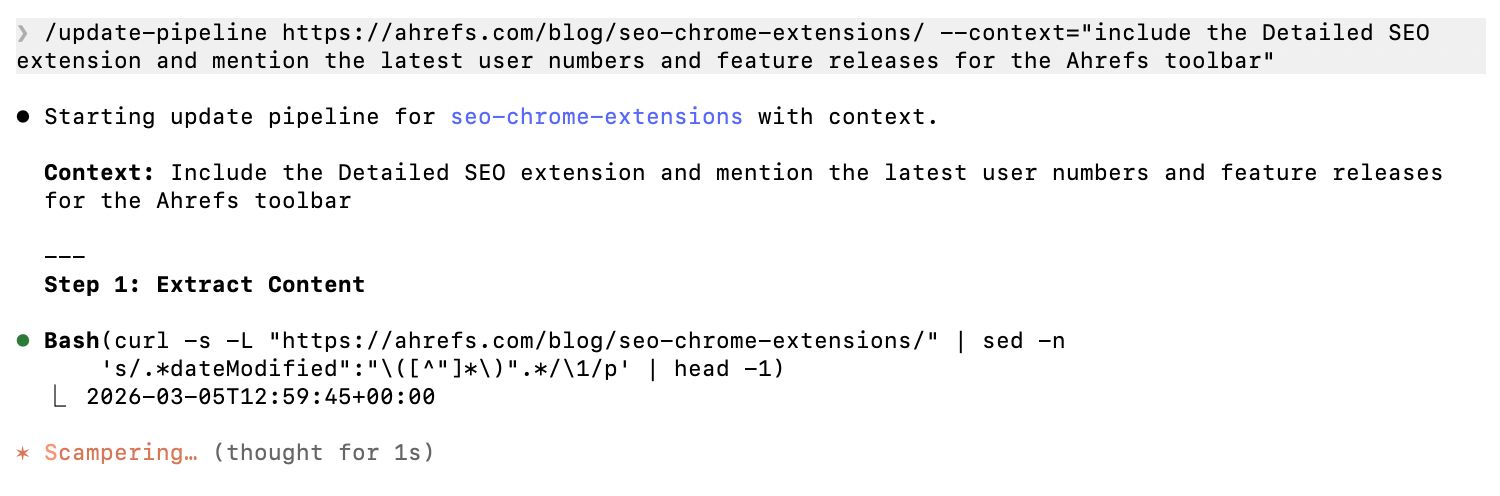

Some of the custom SKILL files we’ve built for Claude Code.

All the vibe-coding infrastructure developed in the past year has had a transformative impact on the usefulness of LLMs generally. LLMs are still “just” sophisticated autocompletes—and we have certainly not achieved AGI—but companies like Anthropic and OpenAI have succeeded in harnessing that behaviour in a way that seems much, much more useful than the sum of its parts.

And importantly, the task set before them—content marketing—is not particularly complicated.

Most content marketers spend most of their time creating informational, keyword-targeted content: helpful “how-to” articles or comparison lists. These are the time-tested archetypes of content marketing, and they are generally pretty straightforward to create.

As with writing in general, I believe there is a basic recipe for effective search content. Here are some of the core principles that we try to follow in our search content:

- Address the primary search intent

- Build upon the consensus in the existing search results

- Fill any obvious topic gaps between your article and your competitors’

- Add novel information above and beyond the existing results

- Reference any relevant existing content you have already created on the topic

- Reference any relevant external content that might help the reader continue their exploration

- Prioritise topics that allow you to naturally reference your product

- Ensure the article structure is mutually exclusive, collectively exhaustive

- Ensure the article structure actually delivers on the title’s promise

- Hook the reader’s interest with the title and introduction

- Include keywords and keyword variations naturally in important parts of the article

- etc.

These are similarly simple concepts that also ladder up to effective search content. If a person can follow these processes, their search content generally performs well. The same is true of an LLM. If Opus 4.6 or GPT 5.4 can follow these processes, their output will also perform well.

Even the most opaque of these processes is fairly trivial for an LLM to follow, either by providing explicit steps to take (“use WebFetch to run a site: search for ahrefs.com/blog and return the first three articles…”), examples of the desired output (like a reference file of your favourite article introductions), or access to trusted data sources (like the Ahrefs MCP).

A preview of a SKILL file for retrieving existing Ahrefs content for a given keyword.

As much as we might wish it otherwise, effective search content is intensely formulaic (hence the success of the skyscraper method). There is no need for great complexity or novelty, no need for poetry or disagreement with the SERP.

There is some room for innovation and experimentation, but less than you might assume: straying too far outside the Overton window usually degrades performance rather than improving it (I say that after many failed attempts to create “clever” search content).

If Claude can refactor a 100,000-line codebase, it seems arrogant to assume that large language models can’t write an excellent piece of search-optimized content. AI can’t write Shakespeare, but it doesn’t need to.

Final thoughts

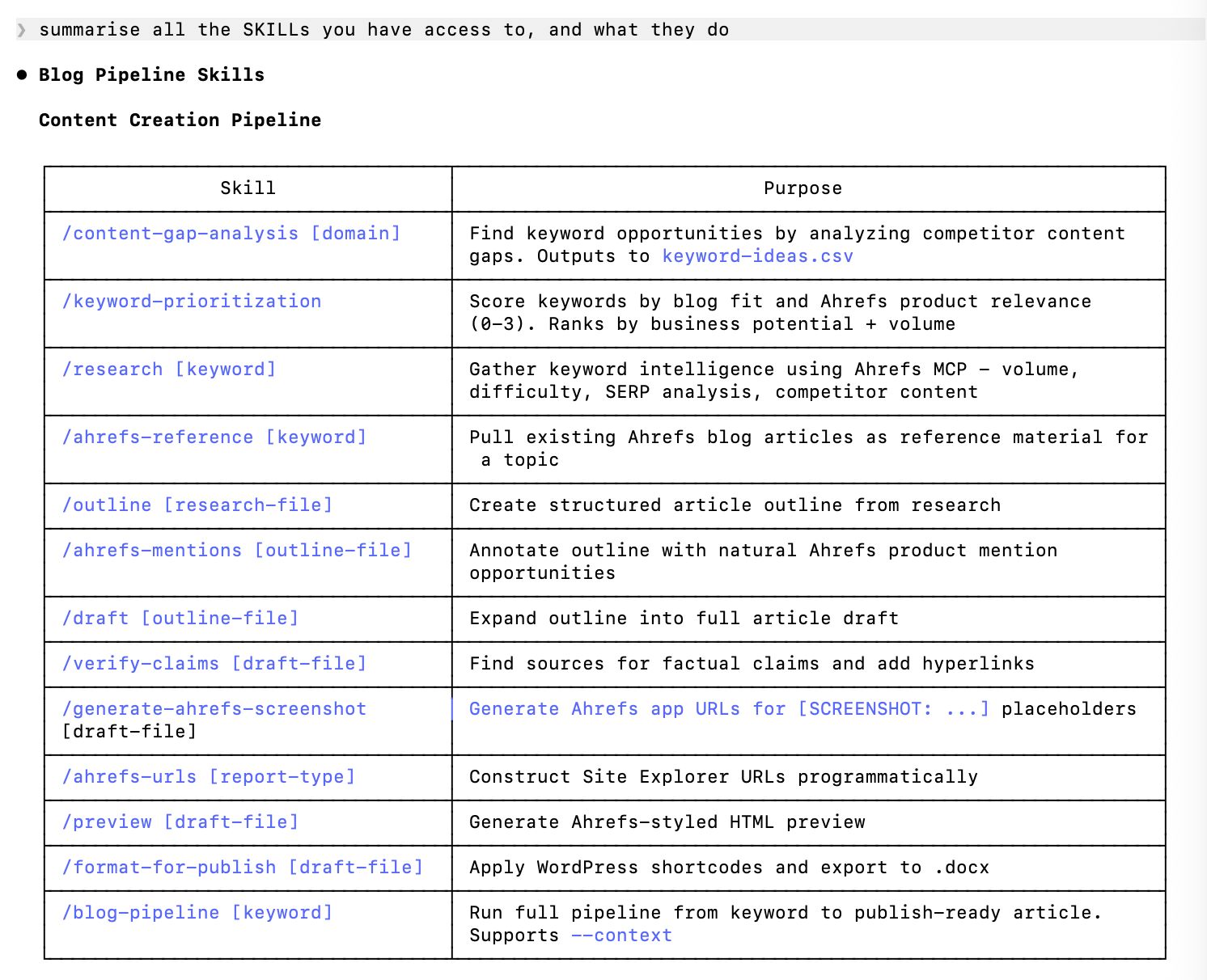

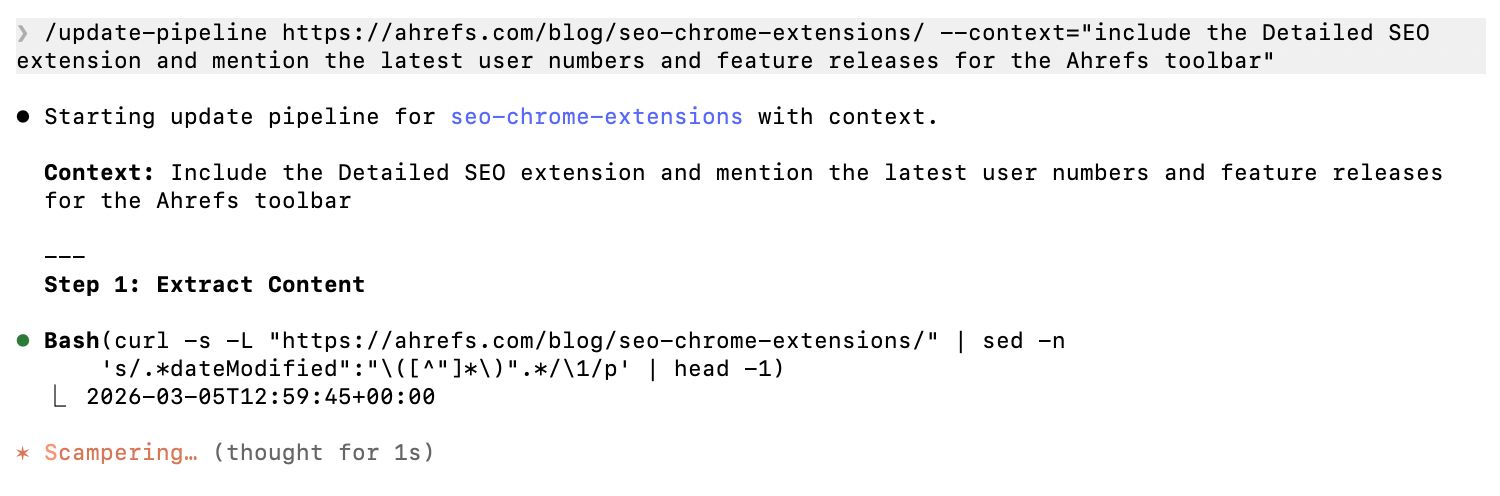

Whether or not I have persuaded you, I have already outsourced significant parts of my role to generative AI. At the time of writing, I use Claude Code, the Ahrefs MCP, and a series of ~15 custom SKILLs, chained together in sequence, to update old articles and create helpful, high-quality content.

Claude embarks on its content updating process.

These articles sound the same. They perform the same. They include my experience and perspective. They are as good as anything I could have written; better, because I wouldn’t have had the time to create them otherwise. There is no trade-off.

There is still a vast gulf in the quality possible between a skilled writer using generative AI to its fullest potential, and the average layperson prompting ChatGPT to “write a blog post”.

But this gulf is far smaller than it used to be, and in the long term it will close as AI platforms continue to democratize access to all of this amazing functionality. The skills of the “content engineer” will become just another workflow in every major LLM platform. Functionally “perfect” AI content is just around the corner, for all of us.

I am comfortable making this argument because there are many parts of my job that I still cannot outsource to AI; there are others I would not, even if I could (like this article).

The path forward will only be found if we are honest about where AI can, and should, be used. Until recently, AI content wasn’t good enough. Now, it is. The sooner we can admit that, the more time we have to focus on the parts of marketing where humans will have a longer, happier tenure.

(And I do not miss writing skyscraper content.)